Article • 3 min read

What really builds trust in AI-powered experiences?

Trust is hard-earned and easily lost—especially as AI powers more of the service experience.

Béatrice Moissinac

AI Security - Principal Security Engineer at Zendesk

Last updated: August 28, 2025

AI is transforming customer and employee service, but too often it’s being deployed without the policies and safeguards needed to sustain trust. And when trust is broken, doubt spreads quickly: customers lose confidence, agents disengage, and leaders hesitate to scale.

Users increasingly see the value AI brings: 61% of customers now expect it to deliver more personalized service. Yet one wrong answer or one confusing interaction can quickly erode that confidence, and momentum stalls.

Change is happening quickly, and organizations that don’t take the time to understand and mitigate potential AI risks will fall behind. Too many still think that a disclaimer or generic privacy pop-up will do the trick. It won’t. To design for trust, you must build safeguards into every layer of the service experience.

What is AI governance?

AI governance refers to the policies and practices that guide the responsible, ethical, and safe deployment and use of AI systems. This includes protecting customer data, giving customers control over when and how they use AI, providing transparency and explainability, and staying current with global regulatory developments.

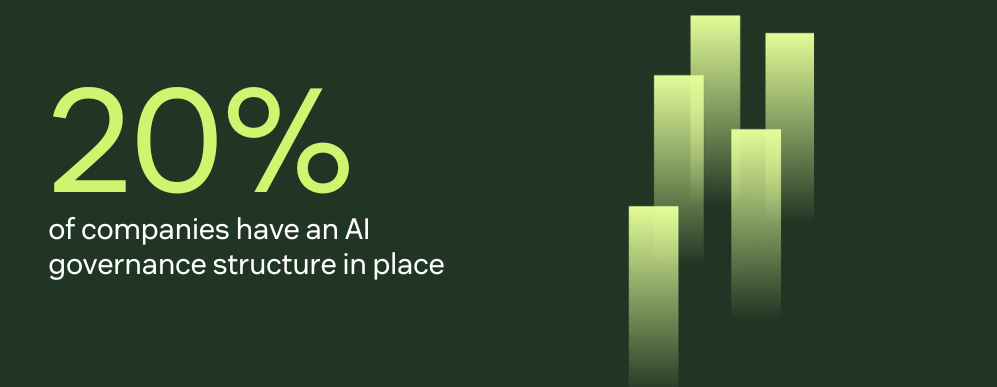

Establishing a strong AI governance structure is a critical first step. However, according to Gartner, only 20% of companies currently have one in place, while 65% are still in the early stages of planning. Lack of governance is one of the biggest risks to AI adoption and the biggest barrier to customer confidence.

Without immediate and meaningful progress here, the gap between what users expect and what companies deliver will only widen. And it will slow teams’ ability to deploy AI that’s truly in service of customers and employees.

Read more about the AI trust gap in Zendesk’s recent report.

Trust by design: 5 ways to deploy AI responsibly

Trust is earned when users have confidence in AI’s foundations—everything that makes it feel safe, helpful, and reliable to those using it. When evaluating AI solutions, leaders should ask: is the system safeguarding customer data? Is it protected against misuse? Can agents stay in the loop?

At Zendesk, we believe the best way to tackle risk is to understand it—and to design for trust from the ground up. We’ve woven five core governance principles into every layer of our AI to ensure users feel respected, protected, and in control. These include:

- Transparency: Customers and agents should always know when AI is being used, how it reached a decision, and what information it relied on. Zendesk labels AI outputs so users always know when AI is involved.

- Control: Users must be able to stay in the loop, review outputs, and override or disable AI as needed. Zendesk customers can turn generative AI features on gradually through the Admin Center, and agents can edit or reject AI-generated replies before sending.

- Security: AI must be resilient to threats, manipulation, and harmful behavior. Zendesk has built-in safeguards like prompt shielding, RAG grounding, and isolated test environments to keep AI responses accurate, secure, and compliant.

- Privacy: Data should always be protected, owned, and used responsibly. With Advanced Data Privacy and Protection (ADPP), Zendesk makes it possible to redact or delete sensitive data. Self-managed encryption keys and customizable consent flows add further control.

- Grounded knowledge: AI must be tied to accurate, real-time knowledge sources to deliver trustworthy results. Zendesk AI uses retrieval-augmented generation (RAG) to ground answers in each customer’s own knowledge base, ensuring responses reflect what the business truly supports.
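
To make the transparency, control, and grounded-knowledge principles concrete, here is a minimal Python sketch of a reply that is answered only from a knowledge base, labeled as AI-generated, and handed to a human when nothing relevant is found. It is an illustration under stated assumptions, not Zendesk's implementation: the names `Article`, `Reply`, `retrieve`, and `draft_reply` are hypothetical, simple keyword overlap stands in for a real retrieval system, and the language-model generation step is left out entirely.

```python
import re
from dataclasses import dataclass


@dataclass
class Article:
    """A knowledge base entry (hypothetical structure)."""
    title: str
    body: str


@dataclass
class Reply:
    """A drafted reply plus the metadata a reviewer would need."""
    text: str
    sources: list[str]   # which articles the answer relied on
    ai_generated: bool   # lets the UI label the reply as AI output
    needs_human: bool    # True when no grounding was found


def _tokens(text: str) -> set[str]:
    # Lowercased alphanumeric words; a toy stand-in for real text matching.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, knowledge_base: list[Article],
             min_overlap: int = 2) -> list[Article]:
    """Return articles sharing at least `min_overlap` words with the question,
    best match first. The threshold is an arbitrary assumption."""
    q = _tokens(question)
    scored = [(len(q & _tokens(a.title + " " + a.body)), a)
              for a in knowledge_base]
    scored = [(score, a) for score, a in scored if score >= min_overlap]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [a for _, a in scored]


def draft_reply(question: str, knowledge_base: list[Article]) -> Reply:
    """Answer only from the knowledge base; otherwise escalate to a person."""
    matches = retrieve(question, knowledge_base)
    if not matches:
        return Reply(
            text="I could not find this in our help center, "
                 "so I am handing you over to an agent.",
            sources=[], ai_generated=True, needs_human=True)
    top = matches[0]
    return Reply(text=f"[AI-generated] {top.body}",
                 sources=[top.title], ai_generated=True, needs_human=False)


kb = [
    Article("Refund policy", "Refunds are available within 30 days of purchase."),
    Article("Shipping times", "Orders ship within 2 business days."),
]
grounded = draft_reply("How do I get a refund within 30 days?", kb)
escalated = draft_reply("Can you recommend a good pizza place?", kb)
```

In this sketch, `grounded` cites the refund article as its source, while `escalated` carries no sources and is flagged for a human agent. The design choice worth noting is that the fallback is a handoff, not a guess: the reply never contains content that cannot be traced to a knowledge base article.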

Closing the trust gap

With tools like Quality Assurance to monitor AI performance, AI agents that follow your procedures, and ADPP for advanced privacy controls, Zendesk equips companies to adopt AI responsibly—while reinforcing the trust that makes adoption sustainable.

Because the future of AI-powered service won’t be defined by how powerful the tech is, but by how much customers trust it to deliver.